- Special Issues-08-02-2019

- Volume 34

- Issue 8

A Short Guide for Raman Spectroscopy of Eukaryotic Cells

Raman spectroscopy has become a highly popular and powerful approach for the label-free assessment of molecular information in biological and clinical samples (1,2). The Raman method is based on inelastic scattering between a photon and a molecule, which excites molecular vibrations and in this way provides the molecular information of a sample in a label-free and nondestructive manner (3). Although the quality of the information is exceptional, applying this method to the characterization of eukaryotic cells requires significant know-how, starting with the right choice of instrumentation and extending to the methods of data preprocessing and analysis. Here, we provide a brief outline of what to consider when applying Raman spectroscopy to the characterization of eukaryotic cells.

Select the Right Instruments for Your Task to Get the Best Outcome

The requirements for biomedical Raman instrumentation for the label-free characterization of eukaryotic cells are significantly more demanding than those of typical applications found in industrial processing, such as pharmaceutical authentication. Besides the small volume of a eukaryotic cell, most intracellular macromolecules, such as proteins, carbohydrates, and nucleic acids, are present at low concentrations. Furthermore, cells or the molecules they contain can exhibit additional fluorescence signals, and the Raman signal of these molecules can also become resonantly enhanced, obscuring important intracellular differences. Thus, the choice of the correct excitation wavelength is an important one. The most common excitation laser wavelengths for Raman spectroscopy of eukaryotic cells are 532 nm and 785 nm. While 532 nm excitation yields a 4.74-times higher signal intensity than 785 nm excitation, as expected from the inverse fourth-power dependence of the scattering intensity on the excitation wavelength, it is also more prone to excite autofluorescence and to resonantly excite molecules. Moreover, this wavelength is more likely to cause intracellular damage in living cells. For dried cells, 532 nm excitation can also burn the sample, even at moderate excitation power. Despite the reduced scattering efficiency, 785 nm is therefore frequently the better choice. The choice of the spectrometer, and especially of the charge-coupled device (CCD) detector, is intrinsically constrained by the choice of the excitation wavelength. The CCD detector is the most crucial component in any Raman setup, because it has the strongest influence on the signal-to-noise ratio (S/N) and, as such, on the performance. Besides the obvious property of quantum efficiency, which defines the fraction of incident photons converted into signal, factors such as signal gain, dark current, and readout noise have to be considered to enable satisfactory results in the analysis of cells. For 785 nm excitation, the suppression of etaloning is of significant importance, and deep-depletion CCDs, which come at a higher cost, are required.
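The factor of 4.74 quoted above can be reproduced with a short calculation. The sketch below assumes the common approximation that the scattered intensity scales with the inverse fourth power of the excitation wavelength; it ignores the wavelength shift of the scattered light and any wavelength-dependent detector efficiency, and the function name is chosen here for illustration only.

```python
def relative_raman_intensity(wavelength_nm, reference_nm):
    """Intensity at `wavelength_nm` relative to `reference_nm`,
    assuming a simple 1 / wavelength**4 scaling (approximation)."""
    return (reference_nm / wavelength_nm) ** 4


# Signal gain of 532 nm excitation over 785 nm excitation.
ratio = relative_raman_intensity(532.0, 785.0)
print(f"532 nm vs. 785 nm: {ratio:.2f}x")  # prints 4.74x
```

The same function can be used to compare other laser lines, but the result is only a first-order estimate of the achievable signal.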

Data Calibration

A proper data calibration strategy is of the highest importance, specifically when data have to be matched between different devices and different data acquisition conditions, for example, different excitation wavelengths and different spectral resolutions. Usually, calibration refers to the calibration of the wavenumber axis and the correction for the transfer function of the optical system. For the wavenumber calibration, two common methods are used: one is based on the measurement of a reference standard, for example, polystyrene or 4-acetamidophenol, and the other on the measurement of an atomic emission reference source, such as a neon lamp. In either case, the measured peak positions, which are detected on different pixels of the CCD camera, are matched to the known positions in a reference spectrum of the substance or source. Points in between are interpolated using an nth-order polynomial function. The intensity calibration is typically performed using a National Institute of Standards and Technology (NIST) reference material or a calibrated white-light emission lamp, both with known emission profiles (4). Here, a reference spectrum is acquired, and a transfer function is calculated based on the known emission profile of the emitter. Each measured spectrum is then corrected using the calculated system response function.
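Both calibration steps can be sketched in a few lines of NumPy. The example below is a minimal illustration on simulated data, not a validated procedure: the detector geometry, the emission profile, and the system response are made up, and the listed band positions are illustrative values that should be replaced by the certified positions of the standard actually measured.

```python
import numpy as np

# --- Wavenumber calibration ---------------------------------------------
# Illustrative band positions (cm^-1) of a reference standard (assumption).
reference_shift = np.array([620.9, 1001.4, 1031.8, 1155.3, 1450.5, 1583.1, 1602.3])

# Simulated detector (assumption): 1024 pixels with a slightly nonlinear
# pixel-to-wavenumber relation that the calibration should recover.
pixels = np.arange(1024)
true_axis = 400.0 + 1.5 * pixels - 1.0e-4 * pixels**2

# Pixel positions at which the reference bands would be detected.
peak_pixels = np.interp(reference_shift, true_axis, pixels)

# Fit an nth-order polynomial (here n = 2) that maps pixel -> wavenumber.
coeffs = np.polyfit(peak_pixels, reference_shift, deg=2)
wavenumber_axis = np.polyval(coeffs, pixels)
max_axis_error = np.max(np.abs(wavenumber_axis - true_axis))

# --- Intensity calibration ----------------------------------------------
# Known emission profile of the reference emitter (made-up smooth curve).
known_emission = 1.0 + 0.5 * pixels / pixels.max()
# System response, unknown in practice (made-up curve for the simulation).
system_response = np.exp(-0.5 * ((pixels - 400.0) / 600.0) ** 2)

# Measure the emitter, then derive the transfer function from its profile.
measured_reference = known_emission * system_response
transfer_function = measured_reference / known_emission
transfer_function /= transfer_function.max()  # scale to a maximum of 1

# Correct a synthetic sample spectrum recorded through the same system.
true_spectrum = 1.0 + 5.0 * np.exp(-0.5 * ((wavenumber_axis - 1001.4) / 6.0) ** 2)
raw_spectrum = true_spectrum * system_response
corrected_spectrum = raw_spectrum / transfer_function
```

With real data, `peak_pixels` would come from a peak-finding step on the measured reference spectrum, and the polynomial order is a choice that depends on the spectrometer.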

Correctly Design Your Experiments

The experimental design is key to extracting the necessary information for a given problem. Most frequently, Raman experiments on eukaryotic cells are performed in imaging mode, which enables the visualization of the distribution of the macromolecular content and also helps to capture all of the intracellular variation of a cell. This, however, is very time consuming. Typical acquisition times for individual spectra are on the order of 1 to 2 s, which results in an acquisition time of 26 to 52 min for an entire cell at diffraction-limited resolution, and therefore in a very limited number of sampled cells per day. While the information on the distribution within cells can be alluring, researchers most frequently take the average spectrum of each cell for further analysis and end up with a limited number of cells, which can reduce the statistical significance of the results. When imaging information is not required, it is highly advisable to sample a large number of cells with only one or a few spectra per cell. One always has the option to acquire individual spectra that capture the required information of the cells. One has to keep in mind, however, that intracellular variations are often larger than the differences between cell types or cell stages. Hence, it is important either to acquire multiple Raman spectra of a cell and use the average of these measurements or, as we have shown previously, to acquire integrated Raman spectra of the cells (5). There are two options to do that: expanding the beam diameter, or rapidly scanning a diffraction-limited spot over the cell. The advantage of the latter approach is that the sampling size can be chosen for each cell individually, from a few micrometers up to nearly 100 µm, which is not dynamically possible with an extended-beam approach. A linked question that has to go into the experimental design is the required sample size. Several publications deal with this crucial aspect. Proper experimental planning includes sample-size planning, which depends specifically on the problem at hand and the effect that is to be measured (6).
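The throughput argument can be made concrete with a back-of-the-envelope calculation. The per-spectrum and per-image times below are the figures quoted in the text; the 8 h measurement day and the 10 s of overhead per cell in single-spectrum mode are assumptions introduced purely for illustration.

```python
SECONDS_PER_SPECTRUM = (1, 2)  # from the text: 1 to 2 s per spectrum
IMAGE_MINUTES = (26, 52)       # from the text: 26 to 52 min per cell image

# Number of spectra in one cell image implied by these figures.
spectra_per_image = [
    minutes * 60 // seconds
    for minutes, seconds in zip(IMAGE_MINUTES, SECONDS_PER_SPECTRUM)
]

# Assumptions for illustration only.
DAY_SECONDS = 8 * 3600   # one 8 h measurement day
OVERHEAD_SECONDS = 10    # locating and positioning each cell

cells_per_day_imaging = [DAY_SECONDS // (m * 60) for m in IMAGE_MINUTES]
cells_per_day_integrated = [
    DAY_SECONDS // (s + OVERHEAD_SECONDS) for s in SECONDS_PER_SPECTRUM
]

print(spectra_per_image)         # [1560, 1560]
print(cells_per_day_imaging)     # [18, 9]
print(cells_per_day_integrated)  # [2618, 2400]
```

Under these assumptions, one integrated spectrum per cell samples roughly two orders of magnitude more cells per day than full imaging, which is the motivation for the integrated acquisition schemes described above.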

Data Preprocessing is the Key

Multiple steps have to be performed to remove artifacts, such as cosmic spikes, and unwanted background contributions from autofluorescence and laser scattering. In addition, as outlined in the section "Data Calibration," the x-axis has to be calibrated, and the data have to be corrected for the system response function. Depending on the S/N of the signal, denoising of the data can also be advisable. The order of these steps is highly important, because it not only affects the performance of the algorithms but can also result in the generation of artifacts. The common preprocessing order is:

1) wavenumber calibration

2) dark current correction

3) cosmic spike removal

4) calibration for system transfer function

5) background correction

6) denoising

Of course, this outline is only a guide, and variations or additional steps may be required to meet specific preprocessing needs, especially when dealing with very complex background contributions. A variety of algorithms are available for the different steps, particularly steps 3, 5, and 6. For example, for the background correction step, methods such as iterative polynomial background correction, asymmetric least squares fitting, and extended multiplicative signal correction (EMSC) are frequently used (7). EMSC has proven especially powerful, because it considers prior knowledge of background components and pure components, and additionally uses polynomial fitting to remove the background contributions. Denoising is frequently performed with Savitzky-Golay filtering, the Whittaker smoother, or singular value decomposition (SVD). Examples of raw spectra and background-corrected spectra are shown in Figure 1.
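Steps 5 and 6 can be illustrated with two of the methods named above. The following is a minimal NumPy sketch of asymmetric least squares background estimation followed by Whittaker smoothing, applied to a synthetic spectrum. The parameter values (`lam`, `p`, `n_iter`) and the simulated bands are assumptions that would need tuning for real data, and dense matrices are used only for brevity; sparse solvers are preferable for long spectra.

```python
import numpy as np


def second_difference_penalty(n):
    """Return D.T @ D, where D is the second-order difference matrix."""
    d = np.diff(np.eye(n), n=2, axis=0)
    return d.T @ d


def whittaker_smooth(y, lam, weights=None):
    """Whittaker smoother: minimize sum(w * (y - z)**2) + lam * |D z|**2."""
    w = np.ones(y.size) if weights is None else weights
    a = np.diag(w) + lam * second_difference_penalty(y.size)
    return np.linalg.solve(a, w * y)


def als_baseline(y, lam=1e5, p=0.01, n_iter=10):
    """Asymmetric least squares: points above the fit get the small weight p."""
    w = np.ones(y.size)
    for _ in range(n_iter):
        z = whittaker_smooth(y, lam, w)
        w = np.where(y > z, p, 1.0 - p)
    return z


# Synthetic spectrum (assumption): two bands on a smooth background plus noise.
rng = np.random.default_rng(0)
shift = np.linspace(400.0, 1800.0, 400)
bands = (20.0 * np.exp(-0.5 * ((shift - 1004.0) / 8.0) ** 2)
         + 10.0 * np.exp(-0.5 * ((shift - 1450.0) / 8.0) ** 2))
true_background = 50.0 + 30.0 * np.exp(-(shift - 400.0) / 800.0)
spectrum = bands + true_background + rng.normal(0.0, 0.2, shift.size)

background = als_baseline(spectrum)              # step 5: background correction
corrected = spectrum - background
denoised = whittaker_smooth(corrected, lam=1.0)  # step 6: denoising
```

A larger `lam` gives a stiffer background estimate, and a smaller `p` pushes the estimate further below the Raman bands. The smoothing strength in the last step trades noise suppression against broadening of narrow bands.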

Figure 1: The raw Raman spectra of eukaryotic cells contain a significant number of background contributions and artifacts. Before any analysis of the spectra can be completed, a significant number of steps have to be performed to extract the molecular information of the cells. (a) Uncorrected cell spectra during acquisition; (b) typical cell spectra after quartz correction and intensity calibration; (c) typical cell final spectra following quartz correction, intensity calibration, background correction, cosmic ray removal, de-noising, cropping, and normalization.

Data Analysis and Evaluation

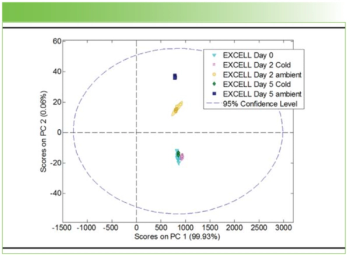

Once the data have been sufficiently corrected, as outlined in the previous section, data analysis can take place. Depending on the task at hand and the specific experimental question, a variety of suitable approaches are available. The most obvious one is a simple visual inspection of the data and a visual comparison between the spectra of the different groups. This assessment can be done by simply calculating a difference spectrum between the relevant groups. Comparing band positions and band intensities offers a simple way to understand the spectral differences. Since the Raman signal depends linearly on the concentration, that is, on the number of molecules in the focal volume, one may perform binary concentration series experiments with nonoverlapping bands; the relevant band intensities can then be plotted against the concentration for a semiquantitative analysis. Nowadays, these simple approaches are rarely used, and researchers rely heavily on multivariate statistical analysis and machine-learning approaches. One has to keep in mind that a Raman spectrum provides information on the vibrations of molecular bonds in a sample, and the same molecular bonds can exhibit different molecular vibrations, providing correlated information. This is also true for most intracellular macromolecules, such as nucleic acids, proteins, and lipids. Here, changes in a specific band will frequently be indicative of changes in other bands of the macromolecule. In essence, this means that Raman spectra contain a lot of redundant information, that is, different spectral bands that describe the same type of information. As such, dimension reduction techniques are heavily used in Raman spectroscopy. Depending on the specific application, for example, class differentiation or a concentration series, different methods can be used. For dimensionality reduction in a class-differentiation problem, one of the most commonly used methods is principal component analysis (PCA), which decomposes the data into new, ordered, orthogonal components, where the first component explains the highest variance of the data set, the second component explains the second highest variance, and so on (Figure 2). After the decomposition, more than 95% of the variance in the data set can typically be explained by the first 15 principal components, and the calculated score values are used for further analysis. The dimensionality of the initial spectrum, which usually contains more than 1000 variables, is thus reduced to the defined number of components, for example, 15. This is still challenging for a visual inspection; hence, additional methods are required. Here, well-known approaches from machine learning, such as support vector machines (SVM), linear discriminant analysis (LDA), or random-forest classification (RFC), are used.
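The dimensionality reduction step can be sketched with a singular value decomposition in NumPy. The data below are synthetic: two hypothetical cell types that differ in a single band, with 1000 spectral variables reduced to 15 score values per spectrum. The band positions, noise level, and class sizes are assumptions, and the fraction of variance captured by 15 components on real cell spectra will differ from this toy case.

```python
import numpy as np

rng = np.random.default_rng(1)
shift = np.linspace(400.0, 1800.0, 1000)  # 1000 spectral variables


def band(center, width, height):
    """Gaussian band on the `shift` axis."""
    return height * np.exp(-0.5 * ((shift - center) / width) ** 2)


# Two synthetic "cell types" (assumption) that differ in one band.
mean_a = band(1004, 10, 1.0) + band(1450, 15, 0.8) + band(1660, 20, 0.9)
mean_b = mean_a + band(785, 10, 0.5)
n_per_class = 40
spectra = np.vstack([
    mean_a + rng.normal(0.0, 0.01, (n_per_class, shift.size)),
    mean_b + rng.normal(0.0, 0.01, (n_per_class, shift.size)),
])
labels = np.repeat([0, 1], n_per_class)

# PCA via SVD of the mean-centered data matrix (rows are spectra).
centered = spectra - spectra.mean(axis=0)
u, s, vt = np.linalg.svd(centered, full_matrices=False)
explained_ratio = s**2 / np.sum(s**2)  # ordered from highest to lowest

n_components = 15
loadings = vt[:n_components]   # shape (15, 1000): spectral signatures
scores = centered @ loadings.T  # shape (80, 15): input for a classifier

cumulative = np.cumsum(explained_ratio)
print(f"variance explained by {n_components} PCs: "
      f"{cumulative[n_components - 1]:.1%}")
```

The `scores` matrix is what would be passed on to a classifier such as SVM, LDA, or RFC, while the rows of `loadings` indicate which spectral regions drive each component. The sign of each component is arbitrary, so loadings should be interpreted together with the corresponding scores.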

Figure 2: The analysis of the Raman data is a highly complex process and requires a good amount of knowledge in multivariate statistical analysis and machine-learning approaches. PCA is a frequent choice for the dimensionality reduction and allows the user to assess the relevant information of the data in a comprehensible way. (a) The scatter plot matrix of the score values shows that Raman spectra of different cell types create distinct clusters. (b) The PCA-loadings allow the assessment of the spectral information responsible for the differences. (c) The image of the score information enables the visualization of the distribution of the molecular information.

Acknowledgment

Financial support from the EU, the Thüringer Ministerium für Wirtschaft, Wissenschaft und Digitale Gesellschaft, the Thüringer Aufbaubank, the Federal Ministry of Education and Research, Germany (BMBF), the German Science Foundation, the Fonds der Chemischen Industrie, and the Carl-Zeiss Foundation is gratefully acknowledged.

References

(1) C. Krafft, I.W. Schie, T. Meyer, and J. Popp, Chem. Soc. Rev. 45(7), 1819–1849 (2016). doi: 10.1039/C5CS00564G.

(2) C. Krafft, M. Schmitt, I.W. Schie, D. Cialla-May, C. Matthäus, T. Bocklitz, and J. Popp, Angew. Chem. Int. Ed. 56(16), 4392–4430 (2017).

(3) I.W. Schie and T. Huser, Appl. Spectrosc. 67(8), 813–828 (2013).

(4) S.J. Choquette, E.S. Etz, W.S. Hurst, D.H. Blackburn, and S. Leigh, Appl. Spectrosc. 61(2), 117–129 (2007).

(5) I.W. Schie, R. Kiselev, C. Krafft, and J. Popp, Analyst 141, 6387–6395 (2016).

(6) C. Beleites, U. Neugebauer, T. Bocklitz, C. Krafft, and J. Popp, Anal. Chim. Acta 760, 25–33 (2013).

(7) E. Cordero, F. Korinth, C. Stiebing, C. Krafft, I.W. Schie, and J. Popp, Sensors 17(8), 1724 (2017).

Iwan W. Schie and Jürgen Popp are with the Leibniz Institute of Photonic Technology, in Jena, Germany. Jürgen Popp is also with the Institute of Physical Chemistry and Abbe Center of Photonics at Friedrich Schiller University, in Jena, Germany. Direct correspondence to:
